When using Ansible to deploy and manage EC2 auto scaling groups (ASGs) in AWS, you may run into, as I did recently, false idempotency failures that can be somewhat befuddling. When the ec2_asg module is called, one of its parameters, vpc_zone_identifier, defines the subnets used by the ASG. A typical ASG configuration uses two subnets, each in a different availability zone, for a robust HA setup, like so:

    - name: "create auto scaling group"
      local_action:
        module: ec2_asg
        name: "{{ asg_name }}"
        launch_config_name: "{{ launch_config }}"
        min_size: 2
        max_size: 3
        desired_capacity: 2
        region: "{{ region }}"
        vpc_zone_identifier: "{{ subnet_ids }}"
        state: present

On subsequent Ansible runs, when ec2_asg is called but nothing has actually changed, you can still get a changed=true result because the order of the subnet IDs that Ansible passes in vpc_zone_identifier differs from the order in which AWS returns them. This makes the play non-idempotent. How does this happen?

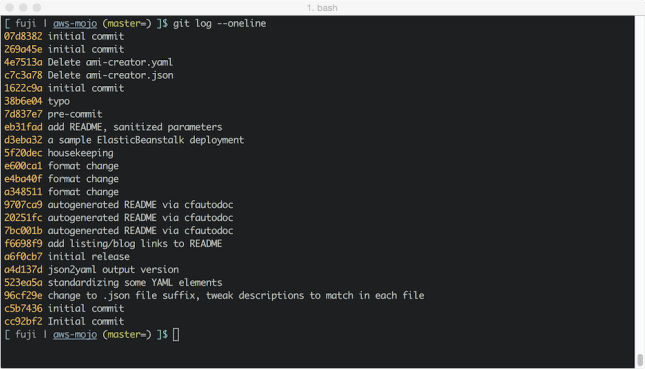

It turns out that Ansible’s ec2_asg module attempts to sort the subnet IDs before comparing them, while AWS does not sort them when it returns those values. Here is the relevant code from the v2.3.0.0 version of ec2_asg.py. Notice the sorting that is attempted in order to match the order AWS provides:

    for attr in ASG_ATTRIBUTES:
        if module.params.get(attr, None) is not None:
            module_attr = module.params.get(attr)
            if attr == 'vpc_zone_identifier':
                module_attr = ','.join(module_attr)
            group_attr = getattr(as_group, attr)
            # we do this because AWS and the module may return the same list
            # sorted differently
            if attr != 'termination_policies':
                try:
                    module_attr.sort()
                except:
                    pass
                try:
                    group_attr.sort()
                except:
                    pass
            if group_attr != module_attr:
                changed = True
                setattr(as_group, attr, module_attr)

The intent is sound, but AWS does not follow any specific ordering when it returns the subnet IDs of an ASG. So when AWS returns its list for the ec2_asg call, the order will sometimes differ from the one in your ec2_asg configuration, and Ansible incorrectly interprets that difference as a change and reports it as such. If you are counting on your Ansible plays to be perfectly idempotent, this is problematic. There is now an open GitHub issue about this specific problem.
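To make the mechanism concrete, here is a minimal Python sketch of that comparison as I read the excerpt. It rests on two assumptions: that the AWS-side value of vpc_zone_identifier arrives as a comma-separated string, and that the subnet IDs (which are made up) are the same set in a different order. Under those assumptions the module's list is joined into a string before the sort is attempted, so the sort raises, the exception is swallowed, and two differently ordered strings are compared. The function names are mine, not part of ec2_asg.

```python
def changed_as_in_excerpt(module_subnets, group_attr):
    """Mirror the excerpt's comparison for vpc_zone_identifier.

    module_subnets: list of subnet IDs as given in the playbook.
    group_attr: comma-separated string (assumed shape of the AWS-side value).
    """
    module_attr = ','.join(module_subnets)  # now a str, not a list
    try:
        module_attr.sort()  # str has no sort(), so this raises AttributeError
    except AttributeError:
        pass
    try:
        group_attr.sort()  # same here
    except AttributeError:
        pass
    return group_attr != module_attr


def changed_ignoring_order(module_subnets, group_attr):
    """Order-insensitive comparison, shown only for contrast."""
    return sorted(module_subnets) != sorted(group_attr.split(','))


# Hypothetical subnet IDs: the same two subnets in a different order.
playbook_subnets = ['subnet-aaaa1111', 'subnet-bbbb2222']
aws_value = 'subnet-bbbb2222,subnet-aaaa1111'

print(changed_as_in_excerpt(playbook_subnets, aws_value))   # True
print(changed_ignoring_order(playbook_subnets, aws_value))  # False
```

The first call reports a change even though the set of subnets is identical, which matches the false positive described above.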

The good news is that the latest development version of ec2_asg, which is written using boto3, does not exhibit this false-positive idempotency error. The devel version (i.e., the unreleased 2.4.0.0) is altogether different from what ships in current stable releases. These false positives can therefore occur in releases up to and including 2.3.1.0 (I have found them in 2.2.1.0, 2.3.0.0, and 2.3.1.0). Sometime soon, we should have a version of ec2_asg that behaves idempotently. But what to do until then?

One approach is to write a custom library in Python that you use instead of ec2_asg. While feasible, it would involve a lot of time spent verifying integration with both AWS and existing Ansible AWS modules.

Another approach, and the one I took recently, is to simply ask AWS for the order in which it holds the subnet IDs in vpc_zone_identifier, and then pass that same ordering to ec2_asg on each run.

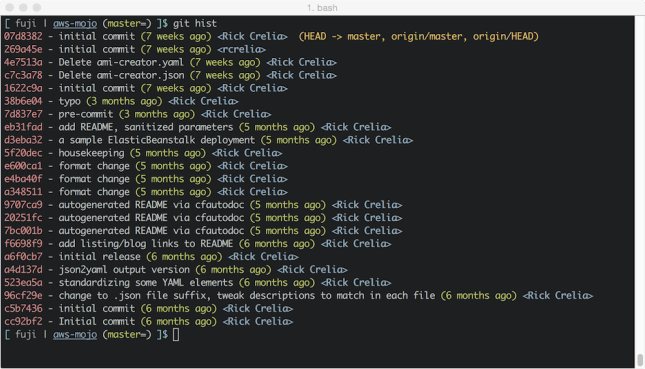

Prior to running ec2_asg, I use the command module to run the AWS CLI's autoscaling command and query for the contents of VPCZoneIdentifier. Then I take those results and use them as the ordered list that I pass into ec2_asg afterward:

    - name: "check for ASG subnet order due to idempotency failures with ec2_asg"
      command: 'aws autoscaling describe-auto-scaling-groups --region "{{ region }}" --auto-scaling-group-names "{{ asg_name }}"'
      register: describe_asg
      changed_when: false

    - name: "parse the json input from aws describe-auto-scaling-groups"
      set_fact:
        asg: "{{ describe_asg.stdout | from_json }}"

    - name: "get vpc_zone_identifier and parse for subnet-id ordering"
      set_fact:
        asg_subnets: "{{ asg.AutoScalingGroups[0].VPCZoneIdentifier.split(',') }}"
      when: asg.AutoScalingGroups

    - name: "update subnet_ids on subsequent runs"
      set_fact:
        my_subnet_ids: "{{ asg_subnets }}"
      when: asg.AutoScalingGroups

    # now use the AWS-sorted list, my_subnet_ids, as the content of vpc_zone_identifier
    - name: "create auto scaling group"
      local_action:
        module: ec2_asg
        name: "{{ asg_name }}"
        launch_config_name: "{{ launch_config }}"
        min_size: 2
        max_size: 3
        desired_capacity: 2
        region: "{{ region }}"
        vpc_zone_identifier: "{{ my_subnet_ids }}"
        state: present

On each run, the following happens:

- A command task runs the AWS CLI to describe the auto scaling group in question. If it’s the first run, the AutoScalingGroups array in the response is empty. The result is registered as describe_asg.

- The JSON data in describe_asg.stdout is parsed into a new Ansible fact called “asg”.

- The subnets in use by the ASG, and their order, are determined by extracting the VPCZoneIdentifier attribute from the first entry of AutoScalingGroups in the asg fact. If it’s the first run, this step is skipped because of the when: clause, which limits task execution to runs where the ASG already exists (runs 2 and later). The resulting list goes into the fact called “asg_subnets”.

- Using the AWS-ordered list from the previous step, Ansible sets a new fact called “my_subnet_ids”, which is then passed as the value of vpc_zone_identifier when ec2_asg is called.
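The parsing in the steps above can be sketched in Python as well, which may help if you want to check the logic outside of a play. This is only an illustration: the function name, the sample JSON, and the subnet IDs are hypothetical, and the fallback to the configured list on a first run is my assumption (in the play itself, the when: clauses simply skip the tasks).

```python
import json


def aws_subnet_order(describe_stdout, configured_subnets):
    """Return subnet IDs in the order AWS reports them for the ASG.

    describe_stdout: JSON text from
        `aws autoscaling describe-auto-scaling-groups`.
    configured_subnets: the list from your own configuration, returned
        unchanged when the ASG does not exist yet (assumed fallback).
    """
    data = json.loads(describe_stdout)
    groups = data.get('AutoScalingGroups', [])
    if not groups:
        # First run: the ASG does not exist, so there is no AWS order yet.
        return list(configured_subnets)
    # VPCZoneIdentifier is a comma-separated string of subnet IDs.
    return groups[0]['VPCZoneIdentifier'].split(',')


# Hypothetical, trimmed-down CLI output for an existing ASG.
sample = json.dumps({
    'AutoScalingGroups': [
        {'AutoScalingGroupName': 'my-asg',
         'VPCZoneIdentifier': 'subnet-bbbb2222,subnet-aaaa1111'}
    ]
})
configured = ['subnet-aaaa1111', 'subnet-bbbb2222']

print(aws_subnet_order(sample, configured))
print(aws_subnet_order('{"AutoScalingGroups": []}', configured))
```

The first call yields the AWS ordering, and the second falls back to the configured ordering because no ASG was found.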

I tested the idempotency of the play by running Ansible one hundred times after the ASG was created; at no point did I receive a false-positive change. Prior to this workaround, a false positive would occur on every run in which I specified the subnet IDs in a different order than the one AWS returned.
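A repeated-run check like this can be scripted. The sketch below is one possible way to do it, not a record of how I ran mine: it assumes ansible-playbook is on the PATH, that the play recap prints changed=N counters per host as in the default output, and the helper names and playbook path are hypothetical.

```python
import re
import subprocess

# Matches the per-host "changed=N" counters in the PLAY RECAP section.
CHANGED_RE = re.compile(r'changed=(\d+)')


def total_changed(recap_text):
    """Sum every changed=N counter found in ansible-playbook output."""
    return sum(int(n) for n in CHANGED_RE.findall(recap_text))


def count_runs_with_changes(playbook, runs=100):
    """Run the playbook repeatedly; return how many runs reported changes."""
    runs_with_changes = 0
    for _ in range(runs):
        result = subprocess.run(
            ['ansible-playbook', playbook],
            stdout=subprocess.PIPE,
            universal_newlines=True,
            check=True,  # stop early if a run fails outright
        )
        if total_changed(result.stdout) > 0:
            runs_with_changes += 1
    return runs_with_changes


# Example (hypothetical playbook path):
# print(count_runs_with_changes('asg.yml', runs=100))
```

A result of 0 means no run reported a change. Note that verbose output could contain other changed=N strings, so this simple pattern is best used with the default verbosity.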

While this is admittedly somewhat kludgy, at least I can be confident that my plays involving AWS EC2 auto scaling groups will behave idempotently when they should. While we wait for the next update to Ansible’s ec2_asg module, this workaround can be used to avoid false-positive idempotency errors.

Until next time, have fun getting your Ansible on!